NPS vs. CSAT: Key differences and when to use them

Compare NPS vs. CSAT, then learn how to calculate these metrics, when to use them, and best practices for measuring and improving the customer experience.

Customer feedback helps you clearly understand different users’ experience with your company and product. Two of the most common metrics, net promoter score (NPS) and customer satisfaction score (CSAT), measure customers’ long-term sentiment and their satisfaction with individual interactions, respectively.

When they’re used correctly, these metrics both show where your customer success strategy might need more attention. Knowing the difference between CSAT and NPS sets your expectations and guides how you interpret results.

This guide compares NPS and CSAT, explains when each metric fits, and shows how you can use them together.

What are CSAT and NPS metrics?

CSAT and NPS are customer success metrics that capture customer satisfaction from different angles. CSAT measures satisfaction after a specific interaction, and NPS asks how likely someone is to recommend your product to others.

How NPS and CSAT are calculated

NPS and CSAT use short customer feedback surveys to collect data, but they calculate what that feedback means in different ways.

NPS calculations

NPS asks customers a single question: “How likely are you to recommend our product to a colleague or peer?”

Respondents answer on a scale from 0 to 10, where 0 means “very unlikely” and 10 means “very likely.” Responses are sorted into three groups:

- Promoters (9 or 10): Customers who have very strong and positive feelings about your company. They’re most likely to become (or already are) advocates.

- Passives (7 or 8): Customers who are satisfied but may leave for a competitor.

- Detractors (0 to 6): Customers who are unhappy and can damage your brand through negative word-of-mouth.

NPS is reported on a scale from -100 to 100 because it’s calculated by subtracting the percentage of detractors from the percentage of promoters. Passives count toward the total number of responses but don’t add to or subtract from the score:

% of promoters - % of detractors = NPS

Let’s say you had 100 survey respondents over a quarter: 60 answered with a 9 or 10 (promoters), 20 answered with a 7 or 8 (passives), and 20 answered with a 6 or lower (detractors). Since 60% of your respondents were promoters and 20% were detractors, your NPS would be 60 - 20 = 40.
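The arithmetic above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function name `nps` and the sample scores are assumptions chosen to match the example (the individual ratings within each group are made up).

```python
def nps(scores):
    """Return the net promoter score for a list of 0-10 survey answers."""
    if not scores:
        raise ValueError("need at least one response")
    promoters = sum(1 for s in scores if s >= 9)   # answers of 9 or 10
    detractors = sum(1 for s in scores if s <= 6)  # answers of 0 to 6
    # Passives (7 or 8) only count toward the total number of responses.
    return 100 * (promoters - detractors) / len(scores)


# Hypothetical data: 60 promoters, 20 passives, 20 detractors.
responses = [10] * 60 + [8] * 20 + [5] * 20
print(nps(responses))  # 40.0
```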

CSAT calculations

CSAT surveys have customers rate a specific interaction, such as a support conversation or resolved ticket. A typical CSAT question might ask, “How satisfied were you with this interaction?”

Responses usually fall on a scale of 1 to 5, with 1 being “very unsatisfied” and 5 meaning “very satisfied.”

CSAT is calculated by dividing the number of positive responses (typically ratings of 4 or 5) by the total number of responses, then converting that number into a percentage:

(Number of satisfied responses ÷ total responses) x 100% = CSAT score (%)

Say you collected 150 CSAT responses over a quarter. Of those, 54 respondents rated their satisfaction a 4, and 66 rated it a 5. Your CSAT calculation would look like this:

([54 + 66] ÷ 150) x 100% = 80%
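The same calculation as a short Python sketch. The function name `csat` and the `satisfied_threshold` parameter are hypothetical, and the 30 remaining responses are assumed to be ratings of 3, since the example only specifies the 4s and 5s.

```python
def csat(ratings, satisfied_threshold=4):
    """Return CSAT as a percentage for a list of 1-5 ratings."""
    if not ratings:
        raise ValueError("need at least one response")
    # Ratings at or above the threshold count as satisfied (4 or 5 by default).
    satisfied = sum(1 for r in ratings if r >= satisfied_threshold)
    return 100 * satisfied / len(ratings)


# 54 fours and 66 fives, plus 30 assumed ratings of 3, for 150 responses.
ratings = [4] * 54 + [5] * 66 + [3] * 30
print(csat(ratings))  # 80.0
```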

Main differences between NPS and CSAT

Here’s a quick summary of what NPS and CSAT measure and when it makes sense to use each one.

When to use NPS and CSAT

Send an NPS survey to get a sense of your overall customer sentiment. It works best when you need a high-level view of how customers feel about your product over an extended period. Many B2B teams use NPS quarterly or biannually to track shifts in trust and identify trends across account segments.

Say you’ve reduced how often your customer success managers check in with accounts that have renewed their contracts once. Running an NPS survey six months after the change can show whether those customers feel they’re having a better (or worse) experience with your company after receiving fewer calls. However, it doesn’t show why they feel that way or what they’d like instead.

Use CSAT when you want to evaluate specific interactions. CSAT surveys work especially well after moments like onboarding milestones or completed tickets. A support team might use CSAT, for instance, to understand if a new follow-through strategy changed satisfaction for recent tickets about API integrations. Because the feedback is tied to a single touchpoint, it helps teams pinpoint where expectations were met or missed.

Best practices for using NPS and CSAT effectively

Getting the most from NPS and CSAT feedback depends on how you run surveys, interpret results, and act on what you learn.

Here’s how to use NPS feedback most effectively:

- Run surveys regularly. NPS is designed for check-ins on a predictable schedule, such as quarterly or biannual surveys. This cadence creates distance from day-to-day issues and makes it easier to spot broader sentiment trends.

- Track trends over time. Individual NPS scores can fluctuate for many reasons, including things unrelated to your team. What matters more is the direction scores move over several survey cycles. For example, a gradual score decline over multiple quarters may indicate growing friction, even if no score looks alarming on its own.

- Pair scores with open-ended responses. One number rarely explains everything. Open-ended responses give you context by showing what customers actually care about and where they see gaps in your support. This feedback helps you interpret their scores and what they reflect, whether it’s product limitations, communication issues, or unmet expectations.

- Segment results to add context. Looking at NPS in aggregate can hide meaningful differences. Segmenting results by account type, size, or lifecycle stage helps you see who’s satisfied and who may need more care.

- Share insights across teams. Bringing product, support, and account management teams into the conversation helps customer input influence product and workflow-level decisions.
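To illustrate why segmenting matters, here is a small Python sketch that computes NPS overall and per segment. The segment names, scores, and the helper `nps_by_segment` are all hypothetical. The point is only that a moderate aggregate score can hide one strong segment and one struggling one.

```python
from collections import defaultdict


def nps(scores):
    """Percentage of promoters (9-10) minus percentage of detractors (0-6)."""
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


def nps_by_segment(responses):
    """Group (segment, score) pairs and compute NPS for each segment."""
    grouped = defaultdict(list)
    for segment, score in responses:
        grouped[segment].append(score)
    return {segment: nps(scores) for segment, scores in grouped.items()}


# Hypothetical survey results tagged by account type.
sample = [
    ("enterprise", 10), ("enterprise", 9), ("enterprise", 7), ("enterprise", 9),
    ("startup", 9), ("startup", 4), ("startup", 6), ("startup", 8),
]

print(nps([score for _, score in sample]))  # 25.0
print(nps_by_segment(sample))  # {'enterprise': 75.0, 'startup': -25.0}
```

The same grouping approach works for survey cycles: key the responses by quarter instead of account type to see the direction scores are moving.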

These practices can help you get more from CSAT scores:

- Send surveys immediately after meaningful interactions. CSAT survey results are more accurate when the experience is still fresh. Sending surveys right after a support conversation increases the chance that responses reflect what actually happened.

- Ask one focused question. Keeping CSAT surveys simple improves response quality. For example, asking “How satisfied were you with the support you received?” is clearer and more actionable than “Did you like how quickly our support team responded, and did they help you in an accurate and friendly way?”

- Review CSAT alongside customer context. Looking at a CSAT score next to the account’s recent tickets and conversations can help your team understand what went right and where they have room to improve.

- Look for patterns before reacting. Single low scores might reflect one-off frustrations. Repeated feedback tied to similar issues or workflows is more likely to point to a process problem that needs to be addressed.

- Follow up on feedback. CSAT responses can reveal confusing documentation or incomplete resolutions. Prompt follow-up shows customers you take their input seriously and makes them feel heard. Letting them know what you’ve done to improve the process also keeps smaller issues from snowballing.
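One way to look for patterns before reacting is to count low CSAT ratings by ticket topic and flag only the topics that recur. The sketch below is an assumption-heavy illustration: the topic labels, the thresholds, and the function `recurring_low_score_topics` are hypothetical, and a real team would tune them to its own ticket volume.

```python
from collections import Counter


def recurring_low_score_topics(responses, low_threshold=2, min_count=2):
    """Return topics with at least `min_count` ratings at or below `low_threshold`.

    `responses` is an iterable of (topic, rating) pairs on a 1-5 scale.
    """
    low_counts = Counter(
        topic for topic, rating in responses if rating <= low_threshold
    )
    return {topic: n for topic, n in low_counts.items() if n >= min_count}


# Hypothetical CSAT responses tagged with the ticket topic.
sample = [
    ("api-integration", 2), ("billing", 5), ("api-integration", 1),
    ("onboarding", 2), ("billing", 4), ("api-integration", 5),
]

print(recurring_low_score_topics(sample))  # {'api-integration': 2}
```

Here the single low onboarding score is treated as a possible one-off, while the repeated low scores on API integration tickets point to a workflow worth reviewing.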

Using CSAT and NPS together

CSAT shows how customers feel about individual interactions, and NPS shows how those interactions add up. When you review both metrics, you can connect day-to-day experiences with long-term sentiment for a more complete picture of account health.

For example, consistently high CSAT scores after support conversations can help explain gradually improving NPS results. A sharp dip in NPS, on the other hand, can signal that it’s time to look more closely at interaction-level feedback.

Regularly reviewing both metrics helps your support team move beyond individual numbers to understand how specific exchanges shape relationships — and your company’s long-term success.

Turn customer feedback into clearer direction with Pylon

NPS and CSAT give you different views of the customer experience. When you choose the right metric for the situation and review results with context, you’ll have a better idea of where to focus your efforts. It’s important to keep all these scores in one place so you can go back to them over time and adjust support accordingly.

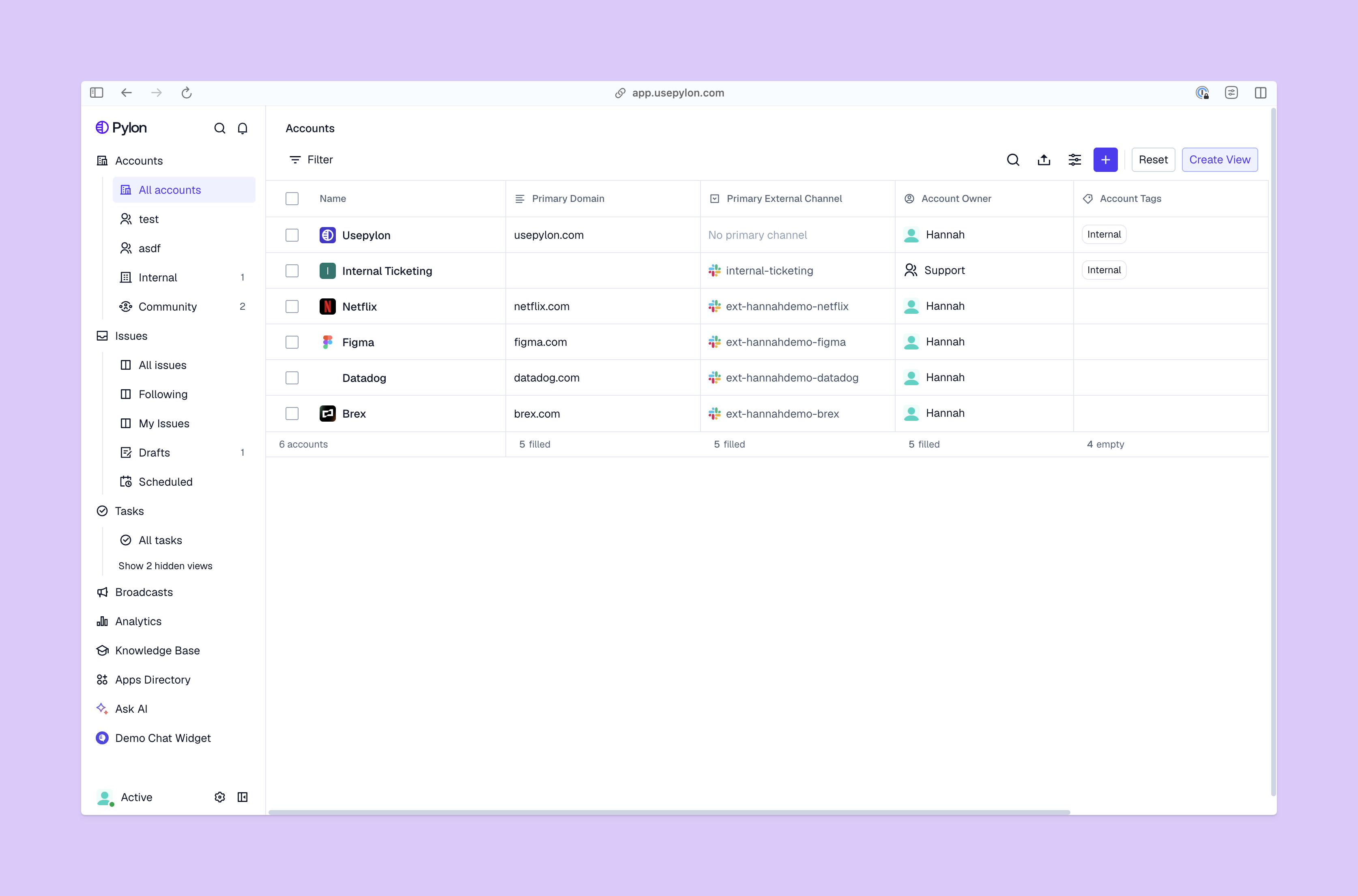

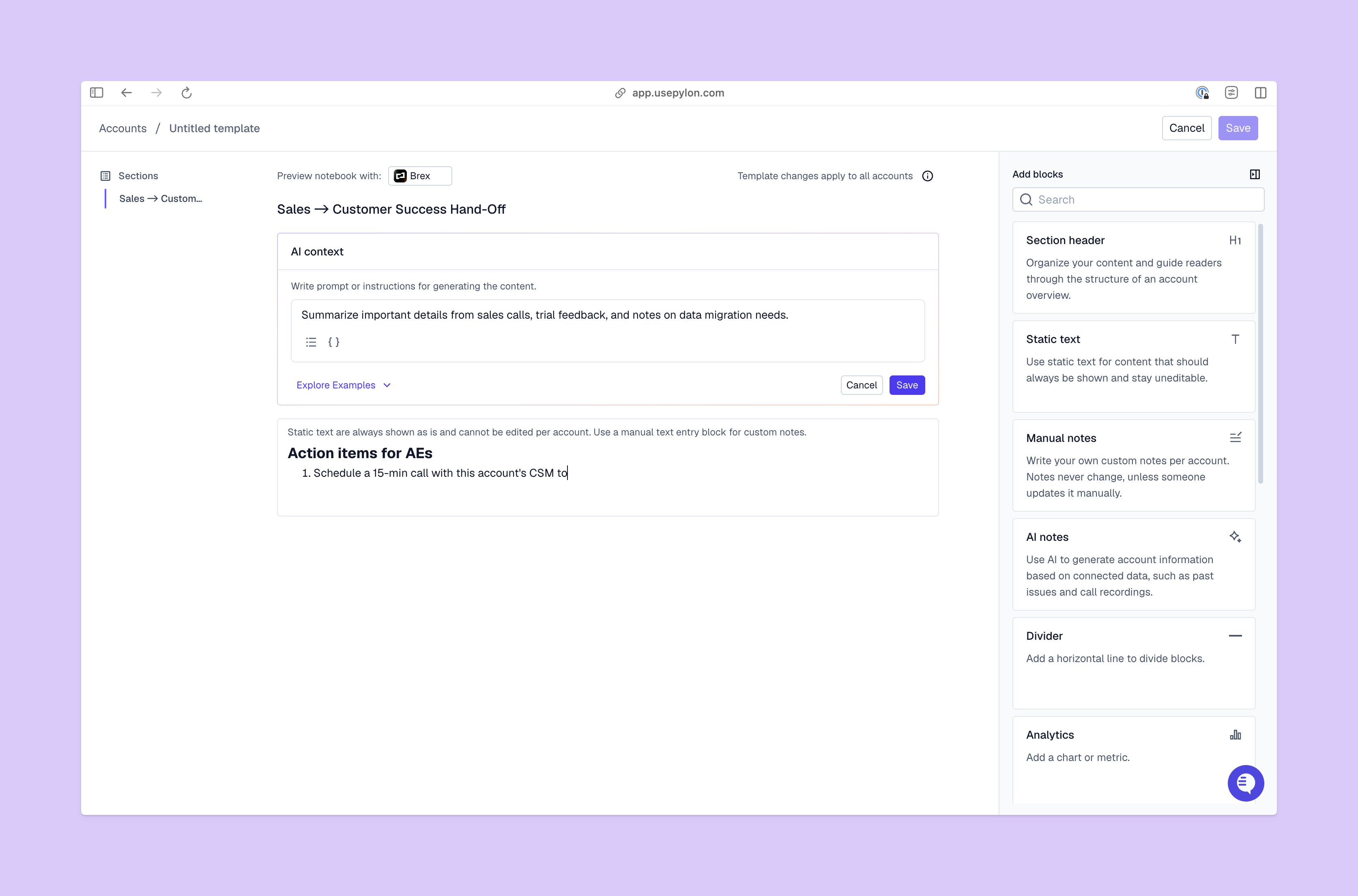

Pylon is the modern B2B support platform that offers true omnichannel support across Slack, Teams, email, chat, ticket forms, and more. Our AI Agents and Assistants automate busywork and reduce response times. Plus, with Account Intelligence that unifies scattered customer signals to calculate health scores and identify churn risk, we're built for customer success at scale.

FAQ

What are the three NPS results categories?

The net promoter score divides customers into promoters (9-10), who express very positive feedback; passives (7-8), who are satisfied but vulnerable to competitors; and detractors (0-6), who are unhappy and can damage your brand through negative word-of-mouth.

What’s a good NPS score?

Generally, any score above 0 is considered good because it means you have more promoters than detractors. As of 2026, a score above 50 is commonly considered excellent, and 70 or higher is world-class. Benchmarks vary by industry.

What’s a perfect CSAT score?

A perfect CSAT score is 100%, meaning every respondent gave a positive rating (a 4 or 5 on a 5-point scale). In practice, a score between 70% and 85% is considered good, with anything above 85% being exceptional and difficult to sustain.

What are some common NPS mistakes?

Common pitfalls include focusing only on the number instead of qualitative feedback, failing to segment your data, and ignoring detractor responses. Additionally, using leading language or colors in the survey can bias results and reduce data accuracy.